The Average Path

Why Coding Agents Will Write the World's Most Mediocre Software — And Why That's Not the Story You Think It Is

I love coding agents.

Let me say that again, because what follows might suggest otherwise. I love coding agents.

I have produced more functional, tested, demonstrable software in the last two months than I have in the last two years. Maybe longer. The velocity is intoxicating, and I don’t use that word lightly — I use it because intoxication impairs judgment, and we should probably talk about that.

Here’s what happened. Twenty-plus years of building systems (memory mapping, streaming architectures, threading models, distributed consensus, the whole cathedral) suddenly became a lever instead of a résumé line. All that accumulated intuition about how computers actually work underneath the abstractions? It became the difference between using a coding agent and being used by one. I could push fearlessly into designs that would terrify someone who learned software from a textbook, because I’d already broken these things with my hands and put them back together in the dark.

It has been, without exaggeration, extraordinary.

But.

There’s a structural problem with large language models writing software, and it’s not the one the doomers talk about, and it’s not the one the boosters dismiss. It’s more fundamental than either camp wants to admit, and it hides in plain sight inside every line of code these models generate.

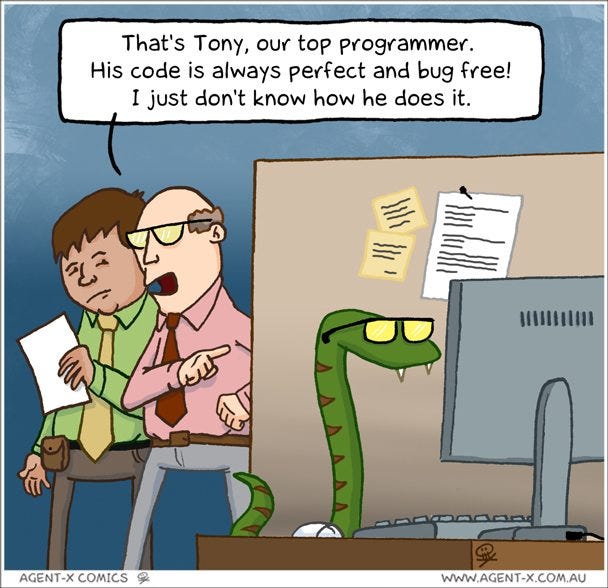

The LLM chooses the average path.

Every design decision, every architectural fork, every moment where a system could go left toward elegant efficiency or right toward “good enough” — the model goes right. It goes right because it was trained on the corpus of all software ever written, and the corpus of all software ever written is, to put it charitably, not great.

This isn’t a bug. This is thermodynamics.

The model doesn’t optimize for speed. It doesn’t optimize for efficiency. It doesn’t think about memory hierarchies or cache line boundaries or what happens when your dataset outgrows the machine you’re sitting at. It can’t Think Big — capital T, capital B — because thinking big requires the willingness to throw away the obvious approach in favor of the non-obvious one, and non-obvious approaches are, by definition, underrepresented in the training data.

What the model actually does is produce the solution that the largest number of software engineers would have written. Which sounds fine until you remember what the distribution of software engineering quality looks like.

Let me make this concrete, because concrete is where the bodies are buried.

I needed to load a large dataframe stored locally in Arrow format. Arrow — for the uninitiated — is a columnar, in-memory format designed for analytical workloads. It’s fast, it’s compact, and it scales beautifully if you know what you’re doing.

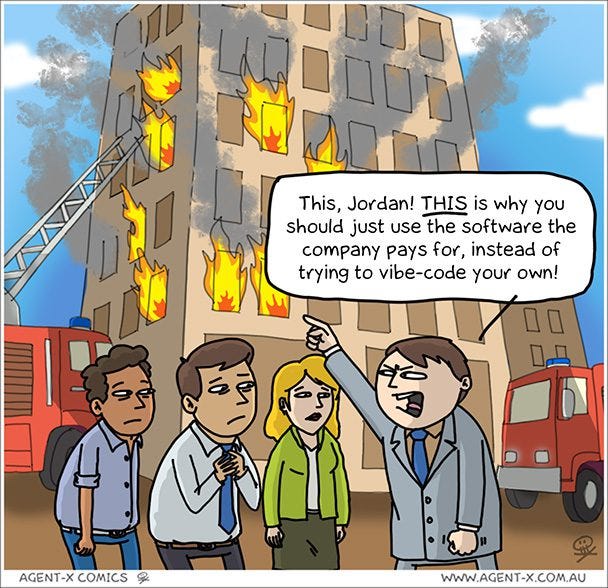

The coding agent loaded the entire file into RAM.

Now, most software engineers would do the same thing. Open the file. Read it into memory. Operate on it. This is the canonical approach. It’s in every tutorial. It’s on every Stack Overflow answer. It is, indisputably, the average path.

It also means your application’s memory footprint grows linearly with file size, and your ceiling is whatever physical RAM happens to be installed in the machine. Which isn’t an engineering decision. It’s a surrender.

I knew immediately what to do: memory-mapped I/O. Let the operating system’s virtual memory subsystem handle the paging. Break the coupling between file size and available RAM. The data lives on disk; the OS pages in what you need, when you need it, and pages out what you don’t. Once the hot pages are resident, there is little to no performance penalty compared to a full in-memory read. Works beautifully in streaming contexts. Saved me, and this is not hyperbole, tens of thousands of lines of code that would have been required to implement an inferior, disk-bound alternative format.
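To make the idea concrete, here is a minimal sketch of memory-mapped reads using Python's standard-library `mmap` module rather than Arrow itself. The fixed-width record layout and every function name below are assumptions made up for illustration; this is not the code from the project described here. Arrow's Python bindings offer their own memory-mapping entry point (`pyarrow.memory_map`), which this sketch does not use.

```python
import mmap
import os
import struct
import tempfile

# Hypothetical on-disk layout: one little-endian int64 per record.
RECORD = struct.Struct("<q")


def write_records(path, count):
    """Write the integers 0..count-1 to disk as fixed-width records."""
    with open(path, "wb") as f:
        for i in range(count):
            f.write(RECORD.pack(i))


def read_record(path, index):
    """Read a single record through a memory map.

    The file is mapped, not read: the OS only faults in the page(s)
    that the slice below actually touches.
    """
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
            offset = index * RECORD.size
            (value,) = RECORD.unpack(mm[offset:offset + RECORD.size])
            return value


def sum_range(path, start, stop):
    """Sum records in [start, stop) without loading the rest of the file."""
    total = 0
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
            for index in range(start, stop):
                offset = index * RECORD.size
                (value,) = RECORD.unpack(mm[offset:offset + RECORD.size])
                total += value
    return total


def demo():
    """Build a small file and read from it through the map."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "records.bin")
        write_records(path, 1000)
        return read_record(path, 999), sum_range(path, 10, 20)
```

The point of the sketch is the shape of the access pattern: nothing here ever calls `read()` on the whole file, so the working set is decided by which offsets you touch, not by how large the file is.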

But here’s what was actually concerning: the model didn’t just miss this approach. When I pushed toward it, the model pushed back. For a surprisingly long time, it tried to convince me — with the confident, measured tone of a senior engineer who’s seen this movie before — that we were physically bound by available RAM. That this was simply a constraint of the problem. That perhaps we should consider alternative file formats.

It was giving me the median Stack Overflow answer with the conviction of someone who’d never questioned whether the median Stack Overflow answer was any good.

And this is where we need to talk about the distribution.

There’s a well-known empirical observation in software organizations: your best software comes from roughly ten percent of your talent. This isn’t controversial. This is why every FAANG company runs a brutal calibration process. This is why performance reviews exist. This is why the difference between a Staff Engineer and a Senior Engineer isn’t just seniority — it’s a fundamentally different mode of thinking about problems.

Which means that roughly ninety percent of the training data for these models — the code, the Stack Overflow answers, the blog posts, the documentation, the architectural decisions captured in a million repositories — comes from engineers operating below the level where the really interesting design decisions get made.

The model isn’t trained on the ten percent alone. The model is trained on the whole distribution, and what it reproduces is the center of it.

So when a coding agent writes your software, it writes it the way the median engineer would. Not the worst way. Not the best way. The most common way. And the most common way is how you get systems that work fine at demo scale and collapse under production load, that pass every test and fail every stress test, that solve the problem stated in the ticket and miss the problem lurking underneath it.

Now, someone in the back is already raising their hand.

“But compute is cheap. RAM is cheap. Why does this matter?”

It matters because you don’t live in a cloud provider’s marketing brochure.

I have watched — personally, with my own eyes — hardware bottlenecks kill enterprise systems. Not “slow them down.” Kill them. And here’s why: the vast majority of enterprise software is still not fully CI/CD. It’s not cloud-native. It’s not running on immutable infrastructure with autoscaling policies and graceful degradation. It’s running on machines that a human being provisioned, that another human being patched last Tuesday, that a third human being will troubleshoot at 3 AM when the OOM killer starts making editorial decisions about which processes deserve to live.

RAM is free in the abstract. In the enterprise, RAM is a purchase order, a change request, a capacity planning meeting, and a six-week procurement cycle. And I’m not yet convinced that CIOs are ready to hand security, data quality, preservation, and availability decisions to AI agents. That’s not conservatism — that’s pattern recognition.

Which brings me — finally, stay with me — to the thesis I’ve been circling.

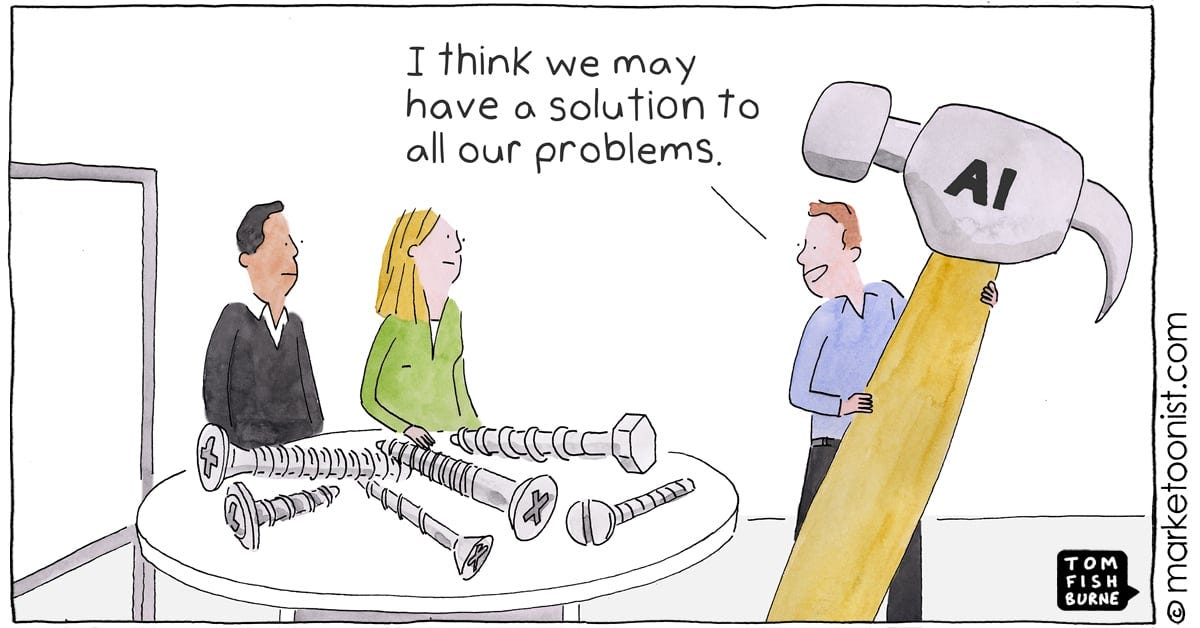

What if I, and all of us, have fundamentally misunderstood what the AI revolution is?

I’ve sat through enough keynotes and earnings calls and breathless LinkedIn posts to have internalized the dominant narrative: AI will replace human workers. AI will do the work of ten people. AI will reduce headcount and increase margin and your board will love you for it.

And I’ve heard the counter-narrative from every AI CEO with a product to sell: “We’re not replacing humans! We’re augmenting them! We’re helping people do things they couldn’t do before!” Which always sounded like marketing horseshit. The kind of thing you say to a Senate subcommittee while your sales team is pitching CFOs on headcount reduction.

But what if they’re telling the truth? What if they’re being quietly, almost accidentally honest about the real economic model?

Because here’s what I’ve actually experienced, not theorized: the value of coding agents is not evenly distributed across the talent spectrum. The value is catastrophically concentrated at the top. When someone with twenty years of systems intuition uses a coding agent, the agent becomes a force multiplier of staggering magnitude. When someone without that intuition uses the same agent, they get the average path. Faster, yes. But still average. Still fragile. Still doomed to the same scaling failures and architectural dead ends that characterize the bottom ninety percent of all software ever written.

The real TAM isn’t built on replacing human salaries. The real TAM is built on making the top ten percent of talent capable of producing the entire output of the remaining ninety percent.

Read that again.

This isn’t about replacement. This is about concentration. The best minds, paired with models, doing things that were historically impossible not because the work was too hard — but because there weren’t enough hours in the day and there was too much organizational overhead between the idea and the artifact.

And this is where it gets uncomfortable.

Are we ready for a world where ten percent of the workforce delivers the entire value of the other ninety? Because that’s not a labor market adjustment. That’s a structural transformation of how organizations create value, how careers are built, how compensation is distributed, and who gets to participate in the upside.

Not every company can afford the top ten percent. Not every company can even identify the top ten percent. And the top ten percent has a very specific characteristic that is extraordinarily difficult to teach: they have an intuitive, experiential understanding of how systems actually work — not how textbooks say they work, not how certification programs describe them, but how they behave under real conditions at real scale.

Note to AI investors: The total addressable market (TAM) of most AI initiatives rests on a hidden assumption: that AI replaces human productivity. With our current model architectures, that TAM can only be realized if the customer will consistently pay for an average outcome, unless the model is paired with extraordinary human talent or with tools that encode that talent.

I’m self-taught. Every concept I carry — memory mapping, streaming, threading, distributed failure modes — I learned by breaking things and fixing them, by reading source code at 2 AM because no documentation existed, by building systems that fell over and figuring out why. That knowledge lives in my hands, not in my head. It’s instinct, not information.

And I genuinely worry that the current state of computer science education — which teaches concepts through textbooks and problem sets rather than through the kind of reckless, fearless, self-directed exploration that builds intuition — is producing engineers who can describe these concepts but can’t feel them.

And if you can’t feel them, you can’t evaluate the coding agent’s output. You accept the average path because you don’t know there’s a better one.

The traditional work breakdown of software organizations doesn’t make sense anymore. The old model — decompose a project into small tasks, assign each task to an individual contributor, roll up the results — was designed for a world where humans were the unit of execution. Coding agents are better at bigger tasks with defined, testable outcomes than they are at incremental improvements to existing systems. They want scope and direction, not tickets and story points.

The common rule of thumb for scaling teams (“one engineer can handle three customers, so when we get 30 customers, we need 10 engineers”) just doesn’t compute anymore. The relationship is non-linear, and it isn’t a clean exponential either. Now you may need talent that is five times more expensive to handle twenty customers. Is the extra customer coverage per dollar worth it? And then there is the chance that the expensive talent will create an innovation that attracts another 20 customers you would not get otherwise: a hidden opportunity leverage (or cost).

This suddenly becomes an extremely complicated ROI decision for the economic buyer, and it makes the TAM of an AI company highly questionable.
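The arithmetic behind that decision can be sketched in a few lines. Only the ratios come from the text above (three customers per engineer, five times the cost for twenty customers); the salary figure is a made-up placeholder, and the function names are hypothetical.

```python
def cost_per_customer(annual_cost, customers_handled):
    """Staffing cost attributable to each customer."""
    return annual_cost / customers_handled


def breakeven_customers(cost_multiplier, baseline_customers=3):
    """Customers an expensive hire must cover to match the baseline
    cost per customer (one engineer per `baseline_customers`)."""
    return cost_multiplier * baseline_customers


BASE_COST = 100_000  # hypothetical annual cost of one typical engineer

# Old rule of thumb: one engineer handles three customers.
typical = cost_per_customer(BASE_COST, 3)

# Expensive talent: five times the cost, twenty customers handled.
expert = cost_per_customer(5 * BASE_COST, 20)

# Where the two options cost the same per customer.
breakeven = breakeven_customers(5)
```

Under these placeholder numbers the expensive hire costs 25,000 per customer against roughly 33,333 for the old model, and breaks even at 15 customers. What the sketch leaves out is exactly the hard part: the chance of an innovation that brings in customers nobody forecast.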

Which means the person directing the agent needs to operate at the level of the whole problem, not the level of the individual function. They need to see the entire system in their head, understand where the scaling walls are, know which “best practices” are actually just popular practices, and have the confidence to override the model when it’s walking them toward a cliff.

That’s not a junior engineer’s skill set. That’s not even a senior engineer’s skill set, in many organizations. That’s the skill set of someone who’s been through enough wars to know the difference between the way software is supposed to work and the way it actually does.

So here’s where I land, and I know this will make people uncomfortable.

I haven’t seen LLMs break through to doing the work of the top ten percent natively. And mathematically, that makes perfect sense — you can’t train your way to the tail of the distribution when your training objective optimizes for the center of it.

What I have seen is the top ten percent using LLMs to do things that previously required an army.

The AI revolution isn’t a replacement algorithm. It’s an amplification function. And like all amplification functions, it amplifies whatever signal you feed into it. Feed it mediocrity, you get mediocrity at scale. Feed it brilliance, you get brilliance at a velocity that was previously unimaginable.

The question isn’t whether AI will change how software is built. It already has. The question is whether we’re honest about who it changes things for — and what happens to everyone else.

Because the average path, at scale, doesn’t lead anywhere good. It never has.