The AI Industry Doesn't Know What Memory Is. And That Should Terrify You.

We solved compute. We're figuring out storage. But memory? We haven't even started asking the right questions.

Let me tell you something about memory.

Tomorrow I turn 45. But almost as though it is yesterday, I can remember watching my mother make carrot halwa on a stove. I don’t remember the date. I don’t remember what the occasion was - other than it was my birthday. But I do remember the smell of the heavy whipping cream getting boiled, the plain scent of carrots being boiled in it, turning sweet as pectin was released, and the incredibly patient, deliberate way that my mother kept turning the frothing, boiling mixture - like a surgeon who’d done this ten thousand times and intended to do it ten thousand more. She was playing the interesting yet dangerous game of deciding how high the temperature could be to maximize pectin release, while avoiding risking burning the family’s yearly budget of half and half, and completely ruining her kid’s birthday.

Now here’s what’s interesting. I haven’t thought about that moment in years. But last week, I watched an engineer debug a failing orchestration pipeline with that same deliberate stillness, and the memory surfaced — unbidden, recontextualized, useful. Not as a transcript of what happened in 1988, but as a framework for understanding patience under technical duress in 2026. Semantically there is absolutely no relationship between making halwa and CI/CD pipelines. In fact, personally I have to wonder why I have made a relationship between something sweet and something so sour, but I digress.

But that’s also human memory. And the essence of where ideas come from, whether it was Einstein’s thought experiments or my designs on eating carrot halwa.

You know what isn’t memory? A markdown file.

The Great Conflation

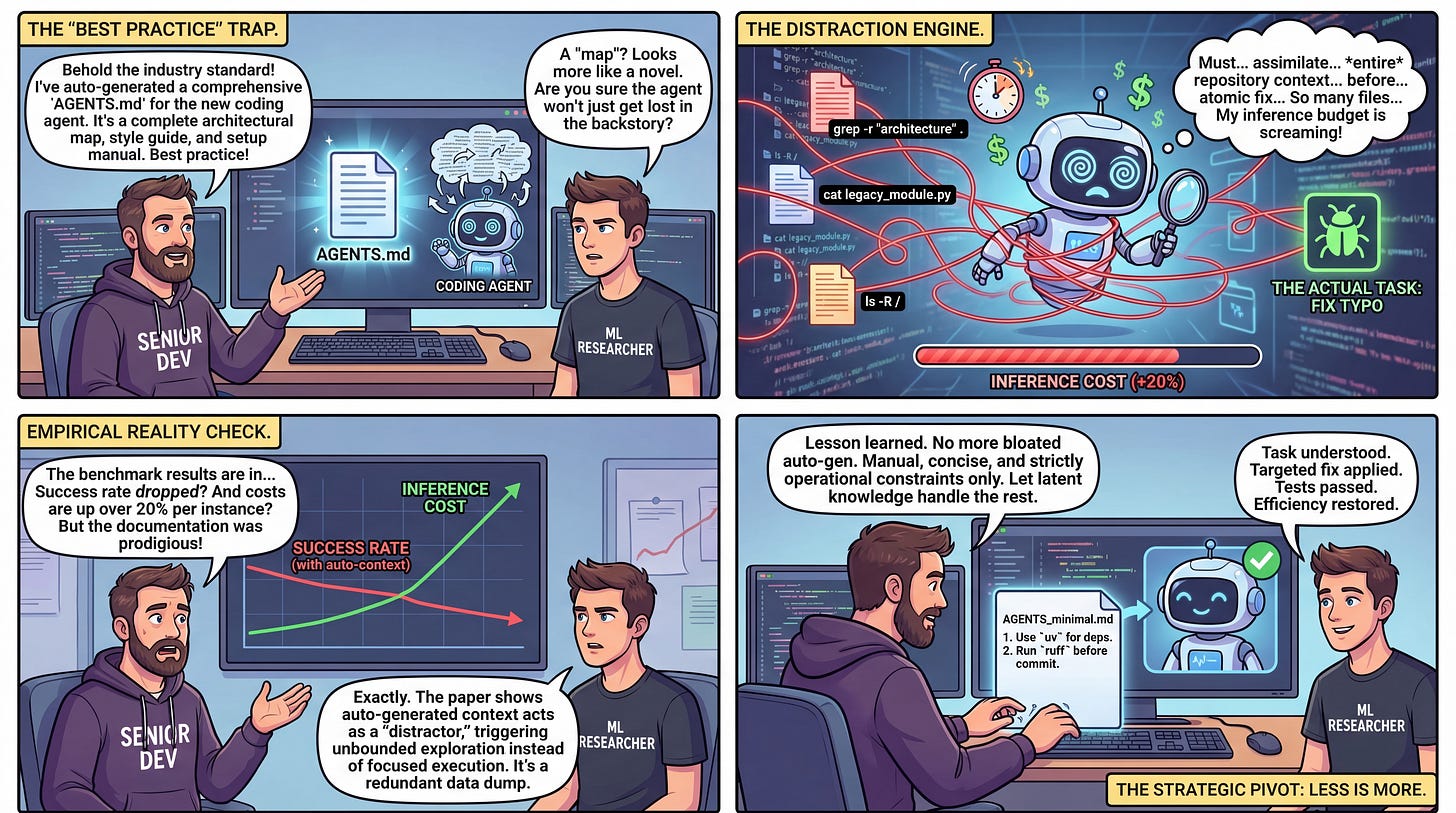

There’s a pattern emerging across the AI agent ecosystem that I find — and I’m going to be charitable here — intellectually troubling. Agent frameworks are creating files called MEMORY.md, stuffing them with compressed observations, and then declaring the memory problem solved.

Some have gone further: agents now steal each other’s memory files to bootstrap their understanding of a project, a codebase, an initiative. Others throw task IDs against these memories and consider them “organized.” The really clever types put a human ID on the memory - but a human ID doesn’t make it human. Nor does it build relationships.

We even see Claude Code do it with its clever marketing positioning: “saving a memory for later now.” I went and read these memories… they’re a bad example of semantic state.

And the industry is nodding along like this is progress.

It isn’t progress. It’s a category error. And category errors, left uncorrected, become architectural debt that compounds until the whole edifice collapses under assumptions nobody bothered to challenge.

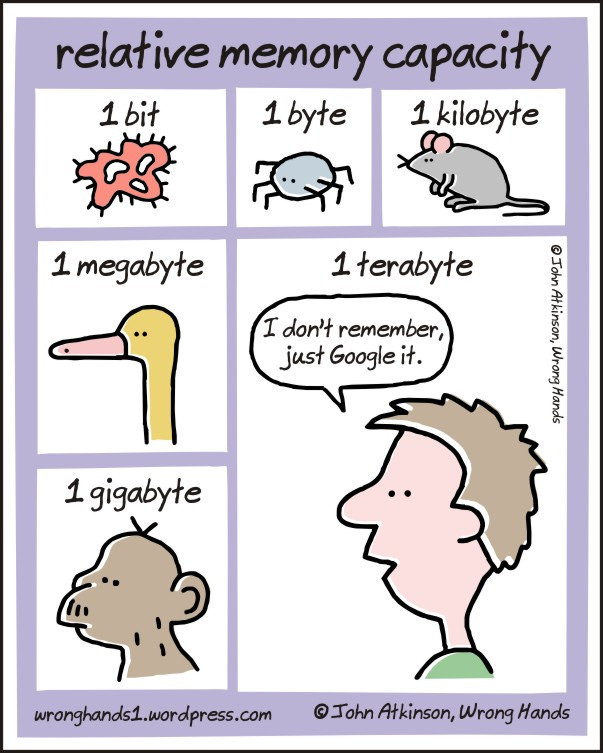

What these systems have built is state. Persistent state. Serialized state. Occasionally useful state. But state is not memory, and the difference isn’t semantic — it’s structural, computational, and, I’d argue, fundamental to what we’re going to need if we want agents that actually reason across time.

State vs. Memory: A Distinction That Matters

Let’s be precise, because precision matters here.

State is a snapshot. It’s a data structure that captures the current known values of a system at a point in time, or, in the case of MEMORY.md, a lossy compression of accumulated observations flattened into prose. State answers the question: what do I currently know? State is flat. State is inert. State sits there waiting to be read.

Memory is a process. It’s reconstructive. It’s contextual. It’s an active computation that, given a retrieval cue, searches across episodic and semantic stores, identifies associatively relevant experiences, and synthesizes a novel summary shaped by the context of the current inquiry. Memory doesn’t answer what do I know? — it answers what is relevant to what I’m thinking about right now, and how should I frame it?

This is not a subtle distinction. This is the difference between a filing cabinet and a research assistant.

To be clear, we encode a memory multiple times per event, and then re-encode it as new memories form. This is why eyewitness testimony is so often unreliable.

When a human talks about the current geopolitical turmoil in Iran, they might recall the Iraq War — not because someone filed “Iraq War” under the heading “Middle Eastern Conflicts to Reference Later,” but because an associative retrieval process found episodic traces that share semantic proximity with the current conversational context, and then reconstructed that memory with emphasis on the dimensions that matter right now. The memory of the Iraq War surfaces differently when you’re discussing Iranian diplomacy than when you’re discussing veterans’ healthcare. Same underlying episodes. Different reconstruction. Different utility.

A MEMORY.md file cannot do this. It was compressed once, with one frame, at one moment. The grain is gone.
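To make the contrast concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption: the episode strings, the `read_state` and `recall` names, and the crude word-overlap scoring, which stands in for whatever associative retrieval a real system would use. The point is only that the state read ignores the cue while the recall is shaped by it.

```python
import re

# Hypothetical episodic traces; a real system would keep rich records, not strings.
EPISODES = [
    "Iraq War 2003 invasion regional diplomacy sanctions Iran border",
    "Iraq War veterans healthcare PTSD hospital funding",
    "birthday carrot halwa kitchen patience stirring",
]

# State: one summary, written once, with one frame.
MEMORY_MD = "The Iraq War was a Middle Eastern conflict."


def tokens(text: str) -> set:
    """Lowercased word set, a stand-in for a real semantic encoding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def read_state(cue: str) -> str:
    """State ignores the cue: every question gets the same frozen text."""
    return MEMORY_MD


def recall(cue: str, k: int = 1) -> list:
    """Memory as a process: rank episodes by overlap with the current cue."""
    cue_tokens = tokens(cue)
    scored = [(len(cue_tokens & tokens(e)), e) for e in EPISODES]
    scored = [s for s in scored if s[0] > 0]
    scored.sort(key=lambda s: s[0], reverse=True)
    return [e for _, e in scored[:k]]


diplomacy = recall("Iran diplomacy")
healthcare = recall("veterans healthcare")
```

With these toy traces, the two cues surface different Iraq War episodes, while `read_state` returns the same sentence for both.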

The Compression Trap

Agent authors — smart, well-intentioned people — will push back here. They’ll say: “When we read MEMORY.md, we’re reading it within a conversational context. The LLM reinterprets the content dynamically.”

Yes. And this is where we need to be honest about what’s actually happening.

When you write an observation into a memory file, you’re performing lossy compression. You’re taking a high-dimensional experience — the full context of an agent’s interaction, the decision tree it traversed, the alternatives it rejected, the uncertainty it felt — and you’re flattening it into a sentence or a paragraph. You’re discarding the dataframes. You’re collapsing the probability distributions into point estimates. You’re throwing away the episodic richness that would allow for flexible reconstruction later.

And then, yes, when you read that compressed artifact back in a new context, the LLM does what LLMs do — it interpolates. But interpolation over compressed data isn’t memory retrieval.

It’s hallucination with guardrails.

You’ve lost the grain, and no amount of contextual reinterpretation can recover information that was discarded at write time.

This is, if I may borrow from information theory, the fundamental problem: you cannot reconstruct what you did not retain. Claude Shannon told us this in 1948. We should probably listen.
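One way to state this formally is the data processing inequality, a standard result in the information-theoretic framework Shannon introduced. The notation here is an assumption made for illustration: X is the full episode, Y is the summary written to the file, and Z is whatever a later reader reconstructs from Y alone.

```latex
X \rightarrow Y \rightarrow Z
\quad\Longrightarrow\quad
I(X;Z) \;\le\; I(X;Y) \;\le\; H(Y)
```

In words: if Z is computed only from Y, it cannot carry more information about X than Y did, and Y cannot carry more than its own entropy. A one-paragraph summary caps what any later reinterpretation can recover.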

Now let me be clear. The word hallucination has become a dirty word. By definition, when we read this now in the context of the AI industry, we all immediately go: “AI wrong, human good.” But I actually disagree. Hallucinations are also the origin of some amazingly good ideas that change human history.

Albert Einstein’s “thought experiments” are glorious hallucinations built on logic that happened to match the fabric of the universe.

So not all hallucinations are bad. The problem though is that the AI industry needs to lean into this by creating multiple forms of memory, often indexed across many different temporal, semantic, reasoning, and spatial contexts, with different levels of “temperature” in order to achieve the same gradient as true memory and concept linking.

If you are about to tell me this feels a bit like the traveling salesman problem on steroids, you’re right.

The worst part is that the tokens in true memory aren’t just semantic. They’re temporal and spatial as well. While you can see OpenAI and Anthropic attempt to bolt on specialized heads (or subordinate task models) to bring these types of tokens to their systems, they are still a second-order concept. The primary is language, on the theory that language can encode everything.

Yes, language can encode everything with enough tokens. But that’s kind of like Fred Flintstone saying he can get anywhere in a car powered by his legs.

Let’s encode the image frames for that Zoom call you’re about to have on Monday using… language.

The Three-Pillar Problem

Here’s how I think about the AI systems landscape, and I think this framing might be useful.

In the early days of cloud computing, we understood the problem as three pillars: compute, storage, and database. You needed processing power. You needed a place to put bytes. And you needed a structured, queryable system that could organize, index, and serve data with semantic awareness. These weren’t the same thing. EC2 wasn’t S3 wasn’t RDS, and collapsing any two of them would have been architectural malpractice.

I kind of feel that AI has the same tripartite structure:

Compute → Models. The LLMs themselves. The inference engines. We’ve made extraordinary progress here. GPT-4, Claude, Gemini — these are remarkable machines for transforming input into output. Compute is not our problem.

Storage → Context. The context window. RAG pipelines. Document retrieval. Vector stores. The mechanisms by which we surface information into the model’s working set. We’re getting better at this. Context windows are expanding. Retrieval is improving. We’re not done, but we’re on a trajectory.

Database → Memory. And here’s where we have a gaping hole.

We don’t have a memory system for AI agents. We have state files masquerading as memory. We have append-only logs. We have compressed summaries. What we don’t have is anything resembling what a database provides in the cloud computing analogy: a structured, indexed, queryable system with its own internal ontology, capable of associative retrieval, contextual reconstruction, and multi-resolution access to historical experience.

Nobody has built RDS for agent memory. We’re all still writing flat files and calling it a database.

To quote Corey Quinn, we’re using S3 as a database and calling it relational.

What Real Agent Memory Would Require

So what would it actually take? Let me sketch the architecture, because I think this is where the industry conversation needs to go.

An episodic store with high-dimensional retention. Don’t compress at write time. Store the full episode — the context, the decision, the alternatives, the outcome, the uncertainty. Store it at the resolution you’d need to reconstruct it flexibly later. Yes, this is expensive. Yes, storage is cheap. Make the trade.
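As a rough illustration of what "don't compress at write time" could look like, here is a minimal Python sketch. The `Episode` and `EpisodicStore` names, the field layout, and the sample values are all assumptions; the fields simply mirror the list above (context, decision, alternatives, outcome, uncertainty).

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Episode:
    """One full episode, stored without summarizing it first (hypothetical schema)."""
    context: str
    decision: str
    alternatives: tuple      # options that were considered and rejected
    outcome: str
    uncertainty: float       # assumed scale: 0.0 = certain, 1.0 = guessing
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class EpisodicStore:
    """Append-only store that keeps every field of every episode."""

    def __init__(self) -> None:
        self._episodes = []

    def write(self, episode: Episode) -> int:
        self._episodes.append(episode)
        return len(self._episodes) - 1   # episode id is its position

    def read(self, episode_id: int) -> Episode:
        return self._episodes[episode_id]


store = EpisodicStore()
eid = store.write(Episode(
    context="orchestration pipeline failing on deploy step",
    decision="roll back the config change",
    alternatives=("restart the workers", "pin the dependency version"),
    outcome="pipeline green after rollback",
    uncertainty=0.4,
))
```

The rejected alternatives and the uncertainty survive the write, which is exactly what a prose summary tends to drop.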

A semantic association layer. Not just vector embeddings (though those are part of it). A genuine ontological structure that captures relationships between episodes — causal links, temporal sequences, thematic clusters, contradiction graphs. When an agent remembers, it shouldn’t be doing a flat cosine similarity search. It should be traversing a knowledge graph that encodes how its experiences relate to each other.

Before people start spamming me with Neo4j articles and Cypher queries, I’m not sure graph databases are a good thing for this. Why? Because this association layer has to be continuously encoded to work right. In other words, you are constantly re-encoding the associations and ontology based on incoming encounters, queries, and memories of these encounters and queries. And then storing them at various temperatures to decide what version should be recalled for a courtroom or a bar. Graphs - and the query mechanisms that come with them - are too rigid for these encodings.
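Here is one hedged way to picture "continuously re-encoded" associations, as a Python sketch. The `AssociationLayer` class, the learning rate, and the decay factor are illustrative assumptions, and this toy covers only the re-encoding idea, not the multiple "temperatures" described above. Every new encounter fades all existing links a little and strengthens the links between episodes that were recalled together.

```python
from collections import defaultdict
from itertools import combinations


class AssociationLayer:
    """Pairwise association weights that are re-encoded on every encounter."""

    def __init__(self, learning_rate: float = 0.5, decay: float = 0.9) -> None:
        self.learning_rate = learning_rate   # assumed reinforcement step
        self.decay = decay                   # assumed per-encounter fade
        self.weights = defaultdict(float)    # frozenset({a, b}) -> strength

    def observe(self, co_recalled: set) -> None:
        # Every existing link fades slightly on each new encounter.
        for pair in self.weights:
            self.weights[pair] *= self.decay
        # Links between episodes recalled together are strengthened.
        for a, b in combinations(sorted(co_recalled), 2):
            self.weights[frozenset((a, b))] += self.learning_rate

    def strength(self, a: str, b: str) -> float:
        return self.weights.get(frozenset((a, b)), 0.0)


layer = AssociationLayer()
layer.observe({"halwa", "pipeline_debug"})
layer.observe({"halwa", "pipeline_debug"})
layer.observe({"pipeline_debug", "k8s_outage"})
```

After these three encounters the halwa-to-pipeline link is stronger than the newer pipeline-to-outage link, and there is no direct halwa-to-outage link at all. Nothing here is a fixed schema; the weights are rewritten by use.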

Contextual reconstruction at read time. This is the critical piece. When an agent retrieves a memory, it shouldn’t get back a pre-written summary. It should get back the raw episodic material, filtered and weighted by the current retrieval context, and then generate a novel synthesis that’s shaped by what it’s trying to do right now. The same underlying memory should produce different outputs when retrieved for different purposes.
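A minimal sketch of that read-time step, in Python. The episode contents, the two purposes, and the per-purpose field weights are all made up for illustration; in a real system the selected raw material would be handed to a model for synthesis rather than returned as strings.

```python
# Hypothetical raw episode, kept at full resolution.
EPISODE = {
    "context": "Kubernetes deployment failed during rollout",
    "decision": "rolled back to the previous image",
    "alternatives": "considered scaling up nodes; considered patching in place",
    "outcome": "service restored in 12 minutes",
    "uncertainty": "root cause not confirmed",
}

# Assumed purpose profiles: how much each field matters for each kind of recall.
PURPOSE_WEIGHTS = {
    "postmortem": {
        "context": 3, "uncertainty": 3, "alternatives": 2,
        "decision": 1, "outcome": 1,
    },
    "status_update": {"outcome": 3, "decision": 2, "context": 1},
}


def reconstruct(episode: dict, purpose: str, max_fields: int = 3) -> list:
    """Select and order raw episode fields by relevance to the current purpose."""
    weights = PURPOSE_WEIGHTS[purpose]
    # sorted() is stable, so ties keep the order given in the profile.
    ranked = sorted(weights, key=lambda name: weights[name], reverse=True)
    return [f"{name}: {episode[name]}" for name in ranked[:max_fields]]


for_postmortem = reconstruct(EPISODE, "postmortem")
for_status = reconstruct(EPISODE, "status_update")
```

The same stored episode yields different material depending on why it is being recalled: the postmortem view leads with context and uncertainty, the status view leads with the outcome.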

Multi-resolution access. Sometimes you need the gist. Sometimes you need the detail. A real memory system would support queries at multiple levels of granularity — from “what’s my general experience with microservice architectures?” to “what specific failure mode did I encounter in that Kubernetes deployment on March 3rd, and what was the pod configuration?”
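A small Python sketch of gist-versus-detail access over the same traces. The `Trace` record, the `recall_at` function, and all sample data (including the year and the pod settings) are hypothetical, chosen only to mirror the two example queries above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Trace:
    topic: str
    date: str     # ISO date; the year is made up for the example
    gist: str     # coarse, cheap to read
    detail: dict  # fine-grained, kept alongside the gist rather than replaced by it


# Hypothetical stored traces.
TRACES = [
    Trace("kubernetes", "2025-03-03", "Rollout failed; rollback fixed it.",
          {"failure_mode": "CrashLoopBackOff", "pod_memory_limit": "256Mi"}),
    Trace("kubernetes", "2025-04-10", "Autoscaler flapped under bursty load.",
          {"failure_mode": "HPA oscillation", "min_replicas": 2}),
    Trace("microservices", "2025-01-15", "Chatty services raised tail latency.",
          {"failure_mode": "fan-out amplification", "hops": 7}),
]


def recall_at(topic: str, resolution: str = "gist",
              date: Optional[str] = None) -> list:
    """Return matching traces at the requested level of granularity."""
    hits = [t for t in TRACES
            if t.topic == topic and (date is None or t.date == date)]
    if resolution == "gist":
        return [t.gist for t in hits]
    if resolution == "detail":
        return [t.detail for t in hits]
    raise ValueError(f"unknown resolution: {resolution}")


general = recall_at("kubernetes")
specific = recall_at("kubernetes", resolution="detail", date="2025-03-03")
```

The design choice worth noting is that the gist does not replace the detail. Both levels stay available, and the query decides which one to read.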

Forgetting. This one’s uncomfortable but important. Human memory decays, and that decay is functional. It prevents overfitting to historical experience. It allows generalization. An agent memory system that retains everything with equal fidelity is going to develop the cognitive equivalent of hoarding disorder — unable to distinguish signal from noise because everything was preserved with the same weight.

Information prioritization is actually what often drives innovation. The focus becomes the great discerning lens of creativity, and when this focus matches the foci of others, you maximize general utility.
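Functional forgetting can be sketched in a few lines of Python. The exponential decay curve, the 30-day half-life, the rule that each recall doubles the half-life, and the pruning threshold are all assumptions picked for illustration, not measured values.

```python
import math


def retention(age_days: float, recalls: int,
              half_life_days: float = 30.0) -> float:
    """Exponential decay; each recall doubles the half-life (assumed rule)."""
    effective_half_life = half_life_days * (2 ** recalls)
    return math.exp(-math.log(2) * age_days / effective_half_life)


def prune(memories: dict, threshold: float = 0.25) -> dict:
    """Keep only memories whose retention is still at or above the threshold."""
    return {
        name: m for name, m in memories.items()
        if retention(m["age_days"], m["recalls"]) >= threshold
    }


# Hypothetical memories: same age, very different usage.
memories = {
    "one_off_typo_fix": {"age_days": 90, "recalls": 0},
    "rollback_playbook": {"age_days": 90, "recalls": 2},
}
kept = prune(memories)
```

Two memories of the same age end up with different fates: the one that kept being useful is retained, and the one that was never recalled fades below the threshold.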

The Uncomfortable Truth

Here’s the part nobody wants to hear.

Building this is hard. It’s not a weekend project. It’s not a clever prompt engineering trick. It’s a systems architecture problem that sits at the intersection of information retrieval, knowledge representation, cognitive science, and distributed systems design. It requires thinking carefully about encoding, indexing, retrieval, and reconstruction as separate, composable operations — not as a single read/write to a file.

And it requires admitting that what we’re currently doing isn’t working. That MEMORY.md is a polite fiction. That agent memory-sharing is just state replication with extra steps. That the reason our agents can’t learn effectively across sessions isn’t a context window problem — it’s a memory architecture problem. You get cute marketing moments of an agent “remembering a past interaction” - but that’s just the great averaging of interactions extinguishing the spark of human innovation.

A short note to Wall Street doom scrollers…

On a side note, this is where I think the AI doom scrollers on Wall Street get it totally wrong. Without good memory and context systems (which, mind you, in my opinion truly haven’t been built yet), we still depend on the good old human to provide the differentiation. And, as we know, we need teams of humans focused on a specific problem to truly drive good ideas - consistently.

Memory helps drive differentiation. And differentiation is required in order to justify the switching cost for a customer.

The Tale of Two Steves

Let’s, for example, do a thought experiment about the smartphone. Let’s hypothetically consider that we had an agent building smartphones before the iPhone was invented. Let’s call our agent innovator Steve AI. Steve AI is competing with Steve Jobs. Both Steves are competing against powerful consumer switching inertia - the BlackBerry with its famous keyboard is still really popular, girls love the Motorola Razr.

Across the thousands of iterations, tool calls, web searches, and market research, Steve AI is likely to produce a smartphone that resembles a cross between the Microsoft Zune and the BlackBerry. It will retrieve semantic memories and related information based on the vocabulary of digital assistants at the time and consumer reception to them.

Steve Jobs, however, sets the bar using - at the time - a semantically orthogonal set of concepts. He brings physical textures to a virtual world - glass, haptics, smoothness, and weight. He distills communication techniques - e-mail, phone calls, and messaging - into almost a video game style interface. Folks would claim that the analogies he was inventing at the time were fueled by acid. Steve AI would likely call many of his statements hallucinations.

One Steve created differentiation - arguably through some element of hallucination combined with his experience (cough, memory). Even more critically, the humans supporting Steve understood his hallucinations. I argue that it would have taken a fair number of iterations for a reasoning model from today - trained on the knowledge and state of the art from then - to understand Steve Jobs. In fact, there is a strong probability that it would never have understood Steve. Steve Jobs and his team go on to become obnoxiously wealthy, powered by memory.

Meanwhile, Steve AI creates a product that is - average. How can you blame it? After thousands of roulette spins at the gradient bingo we call LLMs, you end up with an averaging effect. This averaging effect is only to be expected with such a large endeavor. Average doesn’t justify the switching cost to the consumer, even if average comes at 10% of the cost. Steve AI is out of a job.

The Gross Oversimplification

Unfortunately, marketing, product management, and software engineering aren’t just code. Sure, there is some part of the industry which is just that. But I would argue that software that is valued only by the number of nuts and bolts and the output it produces based on a defined input was probably dead before the advent of LLMs. It was just surviving through obscurity, customer switching costs, and inertia.

Does this mean that the mechanisms by which software is built and delivered are not changing? No. They absolutely are changing, and software engineers who don’t learn these new mechanisms will fail. But the mechanism is not a proxy for value. At the end of the economic value chain, you still have a human buying something to survive, to entertain themselves, to demonstrate love, and to handle life.

And regardless of the arbitrage and disintermediation introduced by agents, we still have to work backwards from those humans.

Scaling Up

The AI industry has gotten very good at solving the problems it knows how to solve and renaming the problems it doesn’t. We called token limits a “context problem” and then expanded the window and built sledgehammers called compaction. We called inconsistency a “prompt engineering problem” and then wrote better system prompts. And now we’re calling flat files a “memory solution” and wondering why our agents still can’t remember what they learned yesterday in a way that’s useful for what they’re doing today.

The only way to avoid the great averaging effects of AI is to create a memory that has an element of over encoding and hallucinations. But this yields a new set of problems - guardrail failure, memory poisoning, fake news, uneven performance. Basically all the problems AI is supposed to fix in humans.

I visited a good friend of mine in Holland two weeks ago, Rob Francis, the CTO of Booking.com. He was openly speculating about “performance managing AI agents.” I have to admit, he is on to something, and it was a brilliant statement. You can’t say that software engineers are irrelevant and going to be replaced by AI unless you are also in the same breath willing to live with the inconsistency that yields software differentiation in the same industry.

You can’t have the innovation without the inconsistency and failure.

And another hidden - but rapidly emerging - problem is token economics. Adding self-encoding memory systems will burn tokens. In a sense, you are constantly fine-tuning your model weights, constantly learning. At some point, you begin to wonder what the ROI is on the tokens against just hiring the equivalent human. So let me get this straight - we need to spend more money on tokens to get less predictable outcomes because that is how you get creativity? I dare you to make that argument to the CFO.

This makes me often wonder if the AI industry rushed into monetization too quickly.

It was one thing when the focus was on replacing low skill workflows with AI. This made sense. Humans aren’t the best at performing repetitive manual labor, and that kind of work doesn’t use all of the capabilities of the human brain or truly tap into the unlimited potential of our creativity. I’m sorry, but the days of a contact center agent repeating the same script over and over again were numbered, even without AI. The days of a bank teller inspecting signatures were numbered as well, just from a security perspective alone!

But in our desire for “super intelligence” or “AGI” - we lurched forward with reasoning models, looking to replace intellectual content with AI, often not considering what made generating this content valuable in the first place. Does Claude Code generate some awesome code for me? Yes. Yes, it does. But guess what? I still have to prompt it to solve problems that I observe. Claude Code without the decades of experience to make good software engineering tradeoffs is not nearly as useful. And here we go - I just used the term - experience.

And I would argue that experience can be quantified and replaced. It can be quantified by spending 100x the tokens we spend today, auto-recursively encoding memories, adding a sprinkle of hallucination, building semantic bridges between unrelated concepts. The salesman can travel to every milestone in the ever winding path of a software architect, spending tokens at every stop.

But making these tradeoff decisions suddenly creates tension with what we typically want from enterprise software: consistency, reliability, safety, and adherence to guardrails.

However, the burn rate dictated monetization. You have to pay for those Super Bowl ads with something other than investor cash, right? Speaking of which - does anybody even remember those anymore? And did that actually cause you to use a service more?

The nascent economics of frontier labs, supposedly founded to fulfill a mission of helping humanity, forced this technology to be launched before building a consensus on this tradeoff.

We need a solution to the memory problem, right?

Compute. Storage. Database. We solved one. We’re working on the second. And we haven’t yet had the honest conversation about the third.

Maybe it’s time we started. Or is it?

As I came to close this post, I struggled with this. The AI industry seemingly likes to talk about itself as though it is going to replace 80% of the jobs with models. CEOs are rushing to shed headcount on the theory that agents will replace some amount of their workforce. To be clear, I don’t dispute the premise of the logic - the question is how much? For the true promise being advertised to be achieved, the memory problem needs to be solved.

It is easy to fall into the dystopian trap of using simple arithmetic to assume human innovation is dead and all discretionary income in the world will go to zero because it is being eaten by AI agents. I mean, an analyst wrote a pretty naive report and the market dropped like 1,000 points. I have always thought this perspective was incredibly naive. The world sure loves a good story.

But I am still not sure whether we have worked backwards enough from the customer, truly understanding the user experience, to justify solving the memory problems or arguing that AI will replace the human workforce. I see a lot of knees being jerked without too much rational data.

Let me give you another logic exercise, near and dear to my heart. One of the most popular data and AI use cases is “chat with your data.” Basically it’s a chatbot sitting on top of a data mart. Ask it any question, regardless of ambiguity, and it will give you an answer.

As you can imagine, in my current job, I have to make this use case work - a lot - for a lot of different customers. While I am proud to say that my team has come up with some very novel perspectives on this problem, I will not say that has been my biggest learning. No, my biggest learning has been the user experience expectation divide between the AI industry and the actual customer.

Stay with me a moment, and I will tie this back to memory. When I first started on this problem area, the priority seemed to be insight quality. While human customers wanted answers quickly, they would sacrifice latency for seemingly amazing insights and richness of answers. This matched what I was seeing being touted by the AI industry. It takes Claude Code hours to make a truly meaningful application. OpenAI’s own datalake explorer takes minutes to run queries. Deep research agents are asynchronous by default.

We don’t care how long it takes, it should be able to answer highly ambiguous queries with super intelligent answers on even the most obscure data in the data mart.

But then as my journey continued, this tradeoff sharply started to move towards latency. It was funny, every senior leader would say at the beginning of the engagement: “we don’t care about latency.” Give it a day or two and “we care about latency, can you get answers back in less than 10 seconds?”

Latency came at the expense of intelligence, while requiring a ton of code and tools to support it. After all, LLMs generate correct SQL on the first attempt only about 40% of the time. And each attempt costs roughly 5-13 seconds.
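A quick back-of-the-envelope check shows why those two numbers collide with a 10-second target. The 40% first-attempt rate, the 5-13 second attempt cost, and the 10-second budget come from the text; the assumption that every retry is independent with the same 40% success rate is a simplification made here (retries that feed the error back to the model may behave differently).

```python
import math

P_SUCCESS = 0.40                # first-attempt success rate quoted in the text
ATTEMPT_SECONDS = (5.0, 13.0)   # per-attempt cost range quoted in the text
BUDGET_SECONDS = 10.0           # the latency target customers asked for


def expected_attempts(p: float) -> float:
    """Mean of a geometric distribution: attempts until the first success.

    Assumes independent retries with a constant success rate (a simplification).
    """
    return 1.0 / p


def success_within(budget: float, per_attempt: float, p: float) -> float:
    """Chance of at least one success among the attempts that fit in the budget."""
    attempts_that_fit = math.floor(budget / per_attempt)
    return 1.0 - (1.0 - p) ** attempts_that_fit


mean_attempts = expected_attempts(P_SUCCESS)                          # 2.5
latency_range = tuple(mean_attempts * s for s in ATTEMPT_SECONDS)     # (12.5, 32.5)
best_case = success_within(BUDGET_SECONDS, ATTEMPT_SECONDS[0], P_SUCCESS)   # about 0.64
worst_case = success_within(BUDGET_SECONDS, ATTEMPT_SECONDS[1], P_SUCCESS)  # 0.0
```

Under these assumptions the expected time to a correct query is 12.5 to 32.5 seconds, already over budget. Even with the fastest attempts, only two fit in 10 seconds, for roughly a 64% chance of a correct answer in time; with the slowest, not even one attempt completes.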

So how does this track back to memory? Well, if you take the position that LLMs are meant to replace humans at 10% of the cost, latency is not the critical objective. Using the example I just gave, I dare you to evaluate how quickly, accurately, and correctly a human generates SQL. If memory is truly a priority to make agents as smart, innovative, and creative as the human role they are replacing - then latency isn’t a factor.

But yet it is. What this tells me is that the model industry has still not understood the ideal customer experience. I am not entirely sure that the user or even the person paying for the AI tokens truly can articulate their priorities or help make this tradeoff yet. And frankly, until they can, any displacement or disruption (e.g., layoffs in anticipation of agents replacing the workforce, bringing app development in house) is premature.

Taking a step back, I don’t think the model providers, the customers, or the integrators have yet figured out what they want. Model providers have made their definition of success replacing human skilled labor - “superintelligence.” The customers seem more confused than ever. We are asking them to switch from a preference towards low latency user experiences, with high degrees of availability and accuracy, to the user experience of talking to a service being benchmarked by its ability to be, well, human. This is not good.

Perhaps this is why we have seen the industry stall out a bit in terms of capability. The danger is that the marketing is getting ahead of the capability. CEOs are making a bet that AI will replace some part of their workforce.

Do you want to work on solving it?